01 February 2024


Ready, set, DSA

The Digital Services Act ("DSA") is already applicable to the big internet players and from 17 February 2024 will finally apply to all other intermediary services too. There are many new obligations, but what does this mean practically speaking? And does the DSA even apply to your business at all? What do you need to change on your website, in your internal compliance system or in your general terms and conditions? What do you have to report to authorities? And what can happen if you fail to comply? We have you covered.


Special provisions only applicable to very large online platforms ("VLOPs") and very large online search engines ("VLOSEs") are not considered in this article.

Reassess applicability

The DSA applies to providers of intermediary services who offer their services to users located within the EU, regardless of the provider's place of establishment. It is only applicable to (i) "mere conduit" services (e.g. internet access providers, domain registrars, DNS services), (ii) caching services (e.g. sole provision of content delivery networks, reverse proxies) and (iii) hosting services (e.g. online platforms, i.e. services enabling sharing of content online, or cloud services). The DSA is not applicable to services outside of these three categories, such as a retailer's webshop.

Furthermore, the DSA does not apply if the intermediary service is an integral part of another service that does not itself qualify as an intermediary service. The decisive factor is whether the intermediary service can be separated from that other service. This can be a tricky question to answer, especially for remote IT services and for transport, accommodation or delivery services. For example, the CJEU held that Uber's intermediation service was an integral part of its transport service, whereas Airbnb's intermediation service was separable from the accommodation services it brokers.

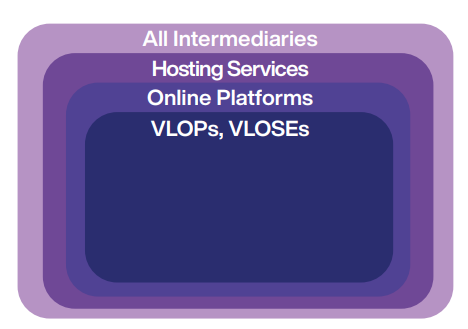

For intermediaries that fall within the scope of the regulation ("Providers"), the DSA provides a staggered catalogue of obligations. Some apply to all intermediaries, others only to hosting services (including online platforms), only to online platforms, or only to VLOPs and VLOSEs.

Micro and small enterprises ("MSEs"), i.e. those with fewer than 50 employees and less than EUR 10m in annual turnover, are exempt from some of these obligations.
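The staggered structure and the MSE thresholds described above can be sketched as a simple lookup. This is only an illustration under stated assumptions: the names `Provider`, `is_mse` and `obligation_tiers` are hypothetical, the thresholds are the simplified ones quoted in this article (the underlying legal definition may involve further criteria), and VLOPs and VLOSEs are deliberately left out. It does not replace a legal assessment of applicability.

```python
from dataclasses import dataclass

# Obligation tiers as described in this article. Each service type also
# carries the tiers listed before its own. VLOPs/VLOSEs are not covered.
TIERS_BY_SERVICE = {
    "mere_conduit": ["all intermediaries"],
    "caching": ["all intermediaries"],
    "hosting": ["all intermediaries", "hosting services"],
    "online_platform": ["all intermediaries", "hosting services", "online platforms"],
}


@dataclass
class Provider:
    """Hypothetical record holding the facts needed for a first check."""
    service_type: str
    employees: int
    annual_turnover_eur: float


def is_mse(provider: Provider) -> bool:
    """Simplified MSE test using the two thresholds quoted in the article."""
    return provider.employees < 50 and provider.annual_turnover_eur < 10_000_000


def obligation_tiers(provider: Provider) -> list[str]:
    """Return the tiers that apply; services outside the three categories get none."""
    return TIERS_BY_SERVICE.get(provider.service_type, [])
```

For example, a small online platform with 30 employees and EUR 4m turnover would fall into all three tiers listed for `"online_platform"` while still counting as an MSE, whereas a retailer's webshop would return an empty list.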

Revamp online presence

Providers must designate a single electronic point of contact to enable national authorities as well as users to communicate directly with them. Providers not established in the EU must designate a sufficiently mandated legal representative in the EU. This contact information must be easily accessible, e.g. in the website imprint.

Providers must also inform users when they are talking to a chatbot and enable users to communicate directly and rapidly with a human contact in a user-friendly manner, e.g. by means of phone, e-mail or electronic contact forms.

Hosting providers must place a report button near all displayed (third-party) content, e.g. in context menus. This function must be easily accessible and user-friendly. It is neither if users must scroll to the bottom of a page or search for a central reporting menu.

Obligations not applicable to MSEs:

Providers must publish (ideally on their website) a yearly, easily accessible and comprehensible transparency report on any content moderation that they engaged in. The report must contain information on content moderation carried out on the Provider's own initiative, such as the use of automated tools or the measures taken to train the persons in charge of content moderation. Online platforms must provide additional information in these reports, such as the number of user account suspensions.

Platforms must ensure their online interfaces do not deceive, manipulate or hinder users' ability to make informed decisions. Examples of these forbidden "dark patterns" include misleading interfaces that may lead users to unintended purchases, deceptive notifications that pressure users into consenting to data collection, and hidden obstacles that make it difficult to unsubscribe or cancel services.

Ads on online platforms must be more transparent. Platforms must provide clear information about the commercial nature of content, ensuring users can easily discern between regular content and paid promotions. This includes flagging sponsored content, disclosures about targeted advertising, and identifying advertisers.

Online platforms must implement measures to ensure a high level of privacy, safety and security for minors. This includes a ban on targeting minors with ads based on profiling.

Traders on B2C online marketplaces must be traceable. They will therefore be required to provide certain essential information prior to using the Provider's service, such as contact, identification and payment details.

Rethink complaint handling

Hosting providers must implement an effective process that enables any individual or entity to report illegal content, such as hate speech, child pornography or IP infringements on their service. Upon receiving such a notification, hosting providers must promptly assess the content's legality and, if found to be unlawful, take expeditious action to remove or disable access to it, or otherwise risk losing their liability privilege.

Hosting providers must disclose to the user why their content was removed and inform them about the possibilities to appeal the decision.
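The notice handling described above could be modelled roughly as follows. This is a minimal sketch, not a compliant implementation: the class names, field names and message texts are hypothetical, and the legality assessment itself is passed in as an input because it is a legal judgement that code cannot make.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Notice:
    """Hypothetical record of a report about allegedly illegal content."""
    content_id: str
    reporter: str
    alleged_violation: str  # e.g. "hate speech" or "IP infringement"


@dataclass
class Decision:
    """Outcome of the assessment, including what the affected user is told."""
    content_id: str
    removed: bool
    statement_of_reasons: Optional[str] = None
    appeal_info: Optional[str] = None


def handle_notice(notice: Notice, found_illegal: bool) -> Decision:
    """Turn a notice plus the result of the legality review into a decision."""
    if not found_illegal:
        # Content stays online, so nothing has to be explained to the uploader.
        return Decision(content_id=notice.content_id, removed=False)
    return Decision(
        content_id=notice.content_id,
        removed=True,
        # Placeholder wording; real reasons must describe the actual assessment.
        statement_of_reasons=(
            f"Removed after a notice alleging {notice.alleged_violation}."
        ),
        appeal_info="You can appeal this decision.",
    )
```

The point of the design is that a removal never leaves the function without both a statement of reasons and information on how to appeal.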

Obligations not applicable to MSEs:

Platform providers must implement an internal complaint-handling system so users can complain about content removal or account suspensions. They must ensure that the appeal decisions are overseen by appropriately qualified staff and not solely based on automated means.

Platforms must prioritise submissions by trusted flaggers. Trusted flaggers are entities that have been awarded this status by the Digital Services Coordinator, a national authority.

Revise GTC

Generally, Providers can determine for themselves which content is permitted on their services and which is not. This principle will continue to apply under the DSA. However, Providers must now disclose in their GTC any restrictions they impose on content provided by users of their services, and explain their content moderation measures and the rules of procedure of their internal complaint-handling system. If platform providers use a recommender system, they must disclose its main parameters in their GTC, together with any options for users to modify or influence those parameters.

The language used in the GTC must be easy to understand. Providers of services predominantly used by minors must pay particular attention to an appropriate description of such conditions and restrictions.

Providers must inform their users of any significant change to their GTC, e.g. changes that could directly affect users' ability to use the service, "through appropriate means". While an explicit reference to the changes by e-mail will satisfy this requirement, a simple change to the text on the website will not.

When drafting these clauses, Providers should always keep in mind that they will in general also be subject to clause control mechanisms under national law, which is especially relevant for Providers that conclude contracts with consumers.

Report to authorities

After receiving an authority order to act against specific illegal content or an order to provide specific information about a user, Providers must inform the relevant authority without undue delay if and when they complied with the order.

Hosting providers must promptly alert law enforcement if they become aware of any information giving rise to a suspicion that a criminal offence involving a threat to the life or safety of a person has taken place, is taking place or is likely to take place.

Remember the consequences

Violating the DSA obligations can result in civil liability and/or administrative fines. These fines can amount to up to 6 % of the Provider's annual worldwide turnover. Moreover, users may have a claim for compensation in respect of any damage or loss suffered due to an infringement by Providers of their obligations.
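To put the ceiling into numbers, the upper limit of a fine can be estimated from turnover. This is a rough sketch under the assumption that the cap is simply 6 % of annual worldwide turnover as stated above; the function name is hypothetical, and the actual fine in a given case is set by the competent authority and may be far lower.

```python
from decimal import Decimal

# Maximum rate quoted in this article: 6 % of annual worldwide turnover.
MAX_FINE_RATE = Decimal("0.06")


def max_fine_eur(annual_worldwide_turnover_eur: Decimal) -> Decimal:
    """Return the upper limit of a fine for the given turnover, in EUR."""
    if annual_worldwide_turnover_eur < 0:
        raise ValueError("turnover must not be negative")
    return annual_worldwide_turnover_eur * MAX_FINE_RATE
```

A Provider with an annual worldwide turnover of EUR 200m would therefore face a ceiling of EUR 12m.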

authors: Roland Vesenmayer, Valentin Demschik

